About Us

Eastside People

Eastside PeopleCase Studies

Case StudiesWhat Our Clients Say

What Our Clients SayOur Partners

Our PartnersEastside Primetimers Foundation

Eastside Primetimers FoundationNews & Insights

News & InsightsContact Us

Contact UsConsultancy

Strategy

StrategyCharity Governance

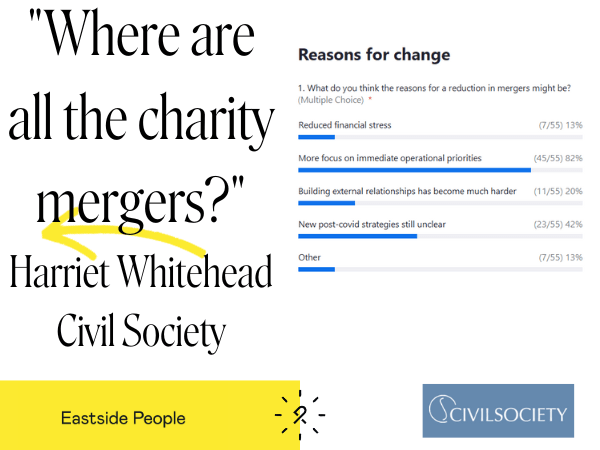

Charity GovernanceCharity Merger & Partnership Services

Charity Merger & Partnership ServicesCharity Income Generation & Fundraising

Charity Income Generation & FundraisingSocial Investment

Social InvestmentCharity Impact Measurement & Evaluation Reports

Charity Impact Measurement & Evaluation ReportsCulture & Workforce

Culture & WorkforceConsultancy Services

Consultancy ServicesOur Consultants

Our ConsultantsRecruitment

Interim Management

Interim ManagementBoard Recruitment

Board RecruitmentExecutive Search

Executive SearchVacancies

VacanciesOur People

Our Culture

Our CultureOur Central Team

Our Central TeamOur Consultants

Our ConsultantsJoin Us

Join UsKnowledge Base